General

-

Scale AI Faces Third Worker Lawsuit Alleging Psychological Harm

Scale AI, a company valued at $13.8 billion last year, is facing its third lawsuit in just over a month. The latest suit alleges psychological trauma suffered by workers reviewing disturbing content…

-

MetAI Secures Nvidia Investment for AI-Powered Digital Twin Technology

Nvidia has made its first investment in a Taiwanese startup, backing MetAI, which specializes in creating AI-powered digital twins. MetAI secured $4 million in its seed round, attracting funding from a mix of strategic and…

-

Blaize Becomes First AI Chip Startup to Go Public in 2025

Investor interest in AI chip startups has surged, driven by the meteoric rise of Nvidia. Among the emerging players, Blaize, an AI chip manufacturer founded by former Intel engineers, announced it will go public via…

-

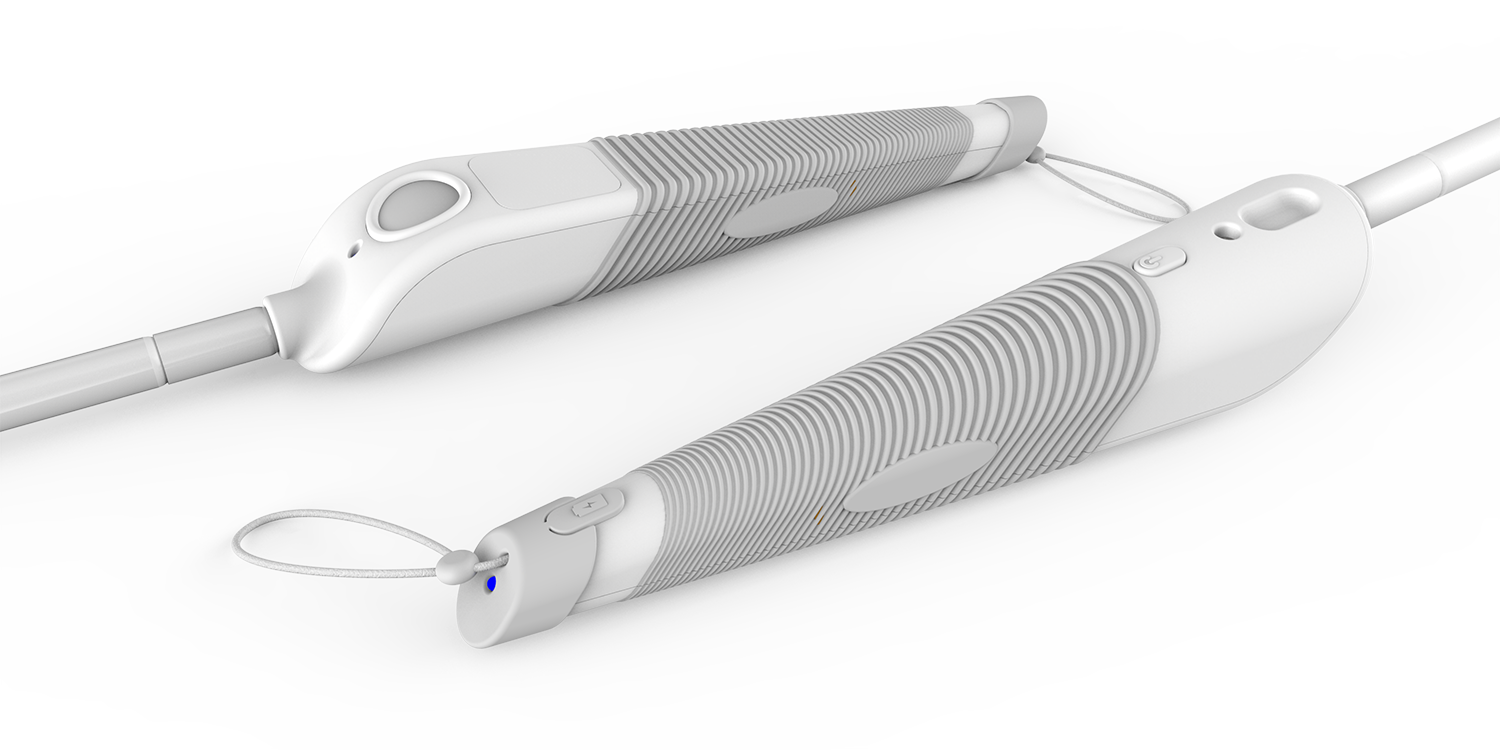

Startups Redesign Canes to Improve Accessibility for the Blind

While much of the tech industry has focused on enhancing accessibility for the blind and visually impaired, the traditional white cane has remained largely unchanged. However, a new wave of startups is aiming to modernize…

-

Former Scale AI Worker Sues Over Pay and Misclassification

Scale AI, valued at $13.8 billion, faces growing legal scrutiny over its labor practices. The company, which relies on contractors to perform tasks like image labeling and rating large language model (LLM) responses, has been…