Pruna AI, a European startup specializing in AI model compression algorithms, has announced the open-sourcing of its AI model optimization framework. This launch, scheduled for Thursday, aims to provide enterprises with advanced optimization features, including an innovative optimization agent that offers significant performance improvements for a variety of AI models.

The company says it has compressed a Llama model to one-eighth of its original size without substantial loss in quality. Pruna AI's framework supports a broad range of models, including large language models (LLMs), diffusion models, speech-to-text models, and computer vision models. For now, the startup is focusing more specifically on image and video generation models, in line with growing demand for efficient processing of visual data.

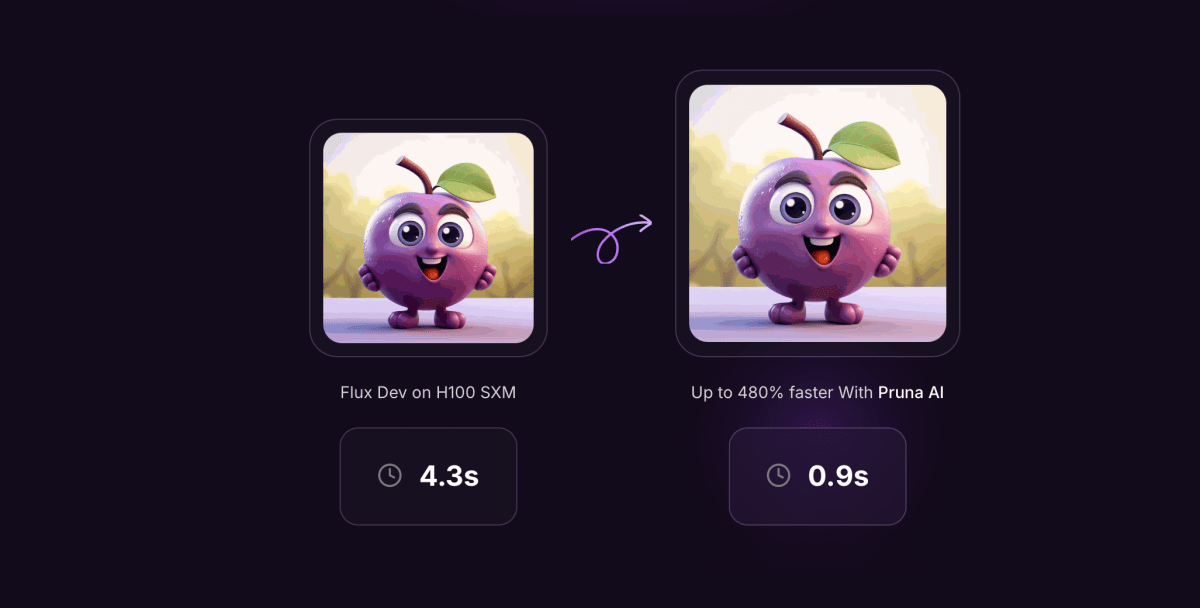

Pruna AI’s framework applies several efficiency methods to AI models, such as caching, pruning, quantization, and distillation. It also evaluates a model after compression, measuring both the quality lost and the performance gained, so that users can see the trade-off they are making.
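To make that trade-off concrete, here is a minimal sketch that combines two of the methods named above, magnitude pruning and 8-bit quantization, on a toy list of weights and then measures the result. It is an illustration only and does not use Pruna AI's framework or API: every function name is hypothetical, the weights are random numbers, and the size ratio assumes weights stored as 32-bit floats before compression and 8-bit integers afterward.

```python
import random

def prune_by_magnitude(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights (hypothetical helper)."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Every weight whose magnitude is at or below the k-th smallest is dropped.
    threshold = sorted(abs(w) for w in weights)[k - 1]
    return [0.0 if abs(w) <= threshold else w for w in weights]

def quantize_int8(weights):
    """Symmetric 8-bit quantization: map floats to integer codes in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]

def mean_abs_error(original, restored):
    """Average absolute difference, used here as a stand-in for quality loss."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)

random.seed(0)
weights = [random.gauss(0.0, 1.0) for _ in range(1000)]  # toy data, not a real model

# Combine two methods: prune half of the weights, then quantize what is left.
pruned = prune_by_magnitude(weights, sparsity=0.5)
codes, scale = quantize_int8(pruned)
restored = dequantize(codes, scale)

# Evaluate both sides of the trade-off.
size_ratio = 32 / 8  # assumed float32 storage vs. int8 codes, ignoring the scale value
sparsity = restored.count(0.0) / len(restored)
error = mean_abs_error(weights, restored)

print(f"size reduction: {size_ratio:.0f}x, zeroed weights: {sparsity:.0%}, "
      f"mean absolute error: {error:.4f}")
```

In a real model the quality check would be a task metric such as accuracy or image quality rather than weight error, and the performance side would include measured latency and memory, but the shape of the evaluation is the same: compress, then report what was gained and what was lost.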

John Rachwan, co-founder and CTO of Pruna AI, emphasized the unique value proposition of their offering. He stated, “For big companies, what they usually do is that they build this stuff in-house. And what you can find in the open source world is usually based on single methods. For example, let’s say one quantization method for LLMs, or one caching method for diffusion models.”

He further elaborated on the challenges faced by organizations looking for optimization tools, noting, “But you cannot find a tool that aggregates all of them, makes them all easy to use and combine together. And this is the big value that Pruna is bringing right now.” Rachwan also pointed out their commitment to standardizing the processes involved in saving and loading compressed models while applying various compression methods effectively.

In addition to its open-source framework, Pruna AI has introduced a pro version that charges users by the hour. The startup recently secured $6.5 million in seed funding from investors including EQT Ventures, Daphni, Motier Ventures, and Kima Ventures. Current users of Pruna AI’s technology include notable companies such as Scenario and PhotoRoom.

Pruna AI positions its pro version as an investment that pays for itself through operational efficiency. Rachwan compared the hourly pricing to renting a GPU on a cloud platform such as AWS, stating, “It’s similar to how you would think of a GPU when you rent a GPU on AWS or any cloud service.”

Looking ahead, Pruna AI plans to release a compression agent soon, extending its model optimization offering. By combining multiple compression methods in a single tool, the company hopes to help enterprises run AI models more efficiently while maintaining high-quality outputs.

Featured image courtesy of TechCrunch
